From Collapse to Construction: Building Event-Driven Systems That Actually Work

Where We've Been

Part 1 exposed the delay problem: Your traditional ERP→ETL→Warehouse→BI stack takes 3 days to answer simple questions. By the time you get the answer, the opportunity is gone.

Part 2 revealed the source: ERP's two ancestral curses (Customization Chaos + Thousand-Table Labyrinth) force data teams to excavate instead of analyze.

Now comes the hard question: What do we build instead?

The Honest Starting Point

Let's be clear about what we're NOT doing:

❌ We're not "eliminating data warehouses" (too evangelical)

❌ We're not claiming "AI solves everything" (too magical)

❌ We're not proposing a rip-and-replace (too risky)

What we ARE doing:

✅ Building a parallel system that handles 80% of business questions faster

✅ Using proven technologies (Kafka, PostgreSQL, LLMs) in a new way

✅ Starting with one use case, proving value, then expanding

✅ Keeping your existing infrastructure running while we transition

This is engineering, not revolution.

The Core Insight: Events, Not States

The fundamental problem with ERP→Warehouse architecture: It stores STATES (final values) instead of EVENTS (what actually happened).

When your ERP records a sale, it stores:

Order ID: 12345

Customer ID: CUST_789

Amount: $500

Date: 2025-01-15

What it DOESN'T store:

Customer browsed 7 products before buying

Added item to cart, removed it, added it back

Hesitated at checkout for 3 minutes

Applied a discount code

Checkout page loaded slowly (4 seconds)

All that context is LOST.

And that context is exactly what you need to answer "why did this happen?"

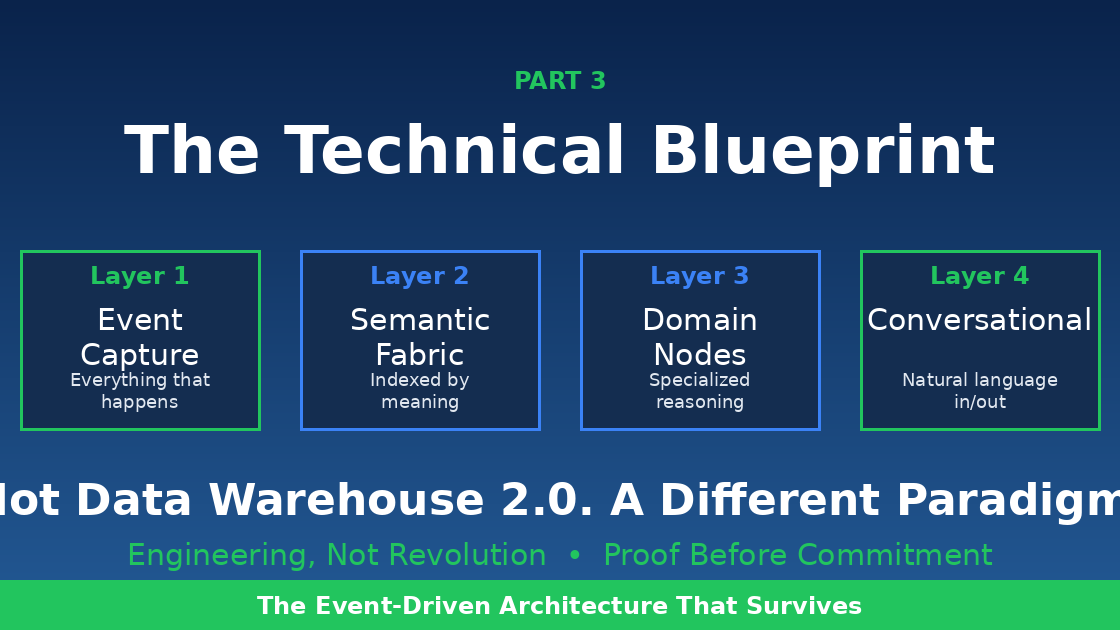

The New Architecture: Event-Driven Intelligence

Here's what we're building:

Let's break down each layer with actual technical details, not buzzwords.

Layer 1: Event Capture (The Foundation)

What Is An Event?

An event is an immutable record of something that happened, with full context.

Example Event Schema:

Key Properties of Events

Immutable Once written, never changed. To correct an error, you write a new event (like accounting).

Self-Contained Every event has all the context it needs. No need to join 12 tables to understand it.

Causally Linked Every event knows what caused it and what it might trigger.

Semantically Indexed Each event has a vector embedding that captures its meaning, enabling semantic search.

Layer 2: Semantic Event Fabric (The Smart Storage)

This is where we store and index events in a way that makes them queryable by meaning, not just by field names.

Technical Components

Component 1: Event Store (PostgreSQL with TimescaleDB)

Why PostgreSQL?

Battle-tested reliability

Native JSON support for flexible event payloads

TimescaleDB extension for time-series optimization

pgvector extension for semantic search

ACID guarantees (unlike some NoSQL solutions)

Schema:

Component 2: Event Bus (Apache Kafka or Redpanda)

Why Kafka/Redpanda?

Handles millions of events per second

Guaranteed ordering within partitions

Replay capability (reprocess events if needed)

Multiple consumers can read same stream

Topic Structure:

Component 3: Stream Processors (Kafka Streams or Flink)

Real-time enrichment and aggregation:

Layer 3: Domain Reasoning Nodes (The Intelligence)

These are specialized query engines for specific business domains. Not generic AI—focused, deterministic processors with LLM-enhanced reasoning.

Anatomy of a Domain Reasoning Node

Example: Customer Behavior Node

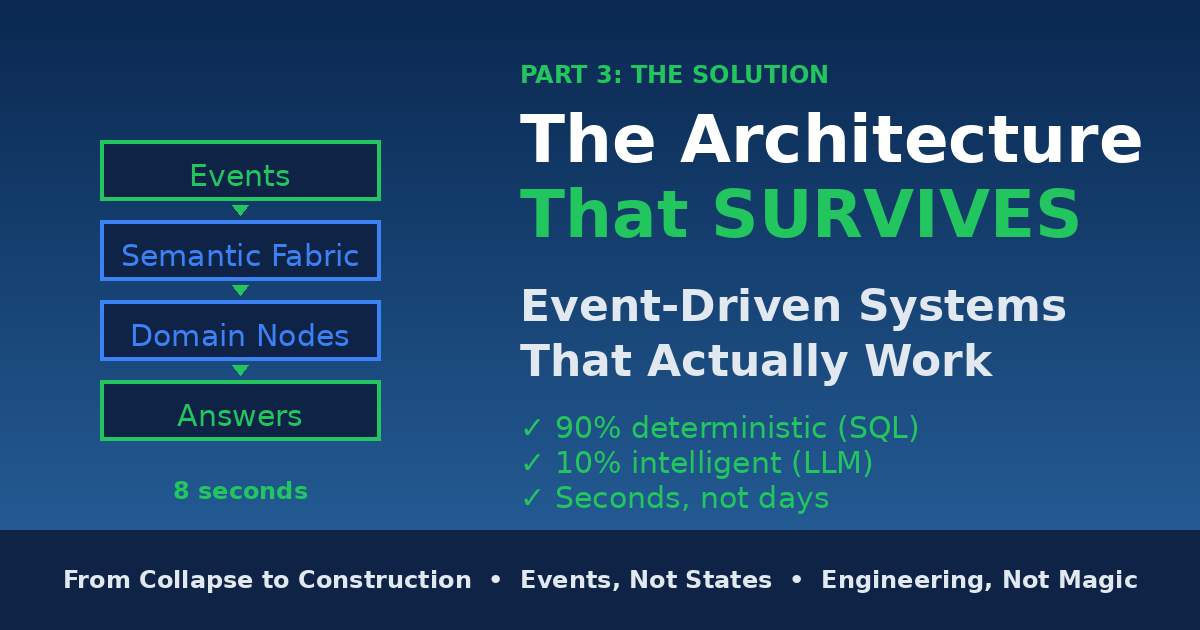

Why This Architecture Works

90% of queries are deterministic (fast SQL):

"Show me conversion funnel for last week"

"What's the cart abandonment rate by device?"

"How many orders today?"

10% of queries need synthesis (SQL + LLM):

"Why did mobile conversion drop yesterday?"

"Which customer segments are at risk?"

"What happened before high-value users churned?"

The LLM is only used for:

Understanding intent (parsing natural language)

Finding semantic patterns (when causality needed)

Synthesizing explanations (turning data into narrative)

The LLM never does math. All calculations are SQL.

Layer 4: Conversational Query Interface

Users don't write SQL. They ask questions.

How It Works

Input: Natural language query Output: Answer with evidence and confidence

Example Interaction:

Technical Implementation

Real-World Example: E-commerce Flow

Let's trace a complete customer journey through the system.

Events Generated

Query Examples

Query 1: Simple Aggregation (Deterministic)

Query 2: Funnel Analysis (Deterministic)

Query 3: Diagnostic (Hybrid - SQL + Vector Search + LLM)

Infrastructure Requirements (Real Numbers)

For a mid-sized e-commerce company (1M visitors/month, 50K orders/month):

Event Volume

~50M events/month

~60 events/second average

~300 events/second peak

Infrastructure

Event Store (PostgreSQL + TimescaleDB):

3-node cluster (primary + 2 replicas)

16 vCPU, 64GB RAM per node

2TB SSD storage (with compression)

Cost: ~$2,000/month (AWS RDS equivalent)

Event Bus (Kafka or Redpanda):

3-node cluster

8 vCPU, 32GB RAM per node

1TB SSD per node

Cost: ~$1,500/month

Domain Reasoning Nodes:

4 nodes (customer, revenue, inventory, product)

8 vCPU, 32GB RAM per node

Cost: ~$1,200/month

LLM API (Claude Sonnet):

~10K queries/month

~80% deterministic (no LLM needed)

~2K queries need LLM synthesis

Cost: ~$500/month

Vector Embeddings (OpenAI):

Batch process 50M events/month

Cost: ~$400/month

Total Infrastructure: ~$5,600/month

Compare to Traditional Stack

Traditional (Snowflake + dbt + Fivetran + Looker):

Snowflake: $3,000/month

Fivetran: $1,500/month

dbt Cloud: $500/month

Looker: $1,000/month

Data team time: 60% on maintenance

Total: $6,000/month + massive time waste

Event-driven stack: $5,600/month + 10% time on maintenance

Migration Strategy: 90-Day Proof of Concept

Week 1-2: Event Capture POC

Goal: Prove we can capture events from existing systems

Tasks:

Set up Kafka cluster

Write event producers for 1 source system (e.g., e-commerce platform)

Capture 1 event type (e.g., order_completed)

Store in PostgreSQL

Success metric: 100K events captured and stored

Week 3-4: Build First Domain Node

Goal: Answer 1 business question faster than current system

Tasks:

Build Revenue Node

Implement deterministic queries (simple aggregations)

Compare results with existing warehouse

Success metric: "Daily revenue by product" query: 0.5s (vs 3 minutes in warehouse)

Week 5-6: Add Vector Search + Semantic Layer

Goal: Enable semantic queries

Tasks:

Generate embeddings for all events

Implement vector similarity search

Test semantic queries

Success metric: "Find orders similar to this one" works accurately

Week 7-8: Conversational Interface

Goal: Natural language queries work

Tasks:

Integrate Claude API

Build intent parser

Implement answer synthesis

Test with 20 real business questions

Success metric: 90%+ accuracy on test questions

Week 9-10: Hybrid Query Execution

Goal: Handle complex "why" questions

Tasks:

Implement causal chain analysis

Build cross-domain query capability

Test diagnostic queries

Success metric: "Why did conversion drop?" answered in <10 seconds with causality

Week 11-12: Pilot with Real Users

Goal: Prove business value

Tasks:

Give access to 5 business users

Track: queries asked, time saved, satisfaction

Compare accuracy vs traditional dashboards

Success metrics:

50+ queries asked

80%+ user satisfaction

100x faster than traditional method

Demonstrate ROI

What Makes This Different

Not Data Warehouse 2.0

This isn't "a faster warehouse." It's a different paradigm:

Old: Store aggregated states, visualize, human interprets

New: Store events, synthesize answers, explain causality

Not "AI Does Everything"

90% is deterministic SQL (fast, accurate, cheap) 10% uses LLM (for understanding intent and synthesis)

The intelligence is in the architecture, not just the AI.

Not Rip-and-Replace

Your existing warehouse keeps running. New system handles new use cases. Migrate gradually as you prove value.

Not Vendor Lock-In

Built on open technologies:

PostgreSQL (open source)

Kafka (open source)

DuckDB (open source)

Claude API (anthropic.com, but swappable)

You own the infrastructure.

The Path Forward

Months 1-3: POC (prove it works)

Months 4-6: Pilot (10-20 users, 20% of queries)

Months 7-12: Scale (50% of queries)

Year 2: Primary system (80% of queries)

Year 3: Warehouse becomes archive only

You don't rip out the old system. You build the new one alongside it. You prove value before betting the company.